Continuous Integration Essentials

This is a short text about continuous integration. It serves as a companion to a lightning talk I give on the subject. I will first try to define the concept, then move on to a short discussion, and finally end with some encouragement and perhaps a call to action.

When defining the concept "continuous integration" it is easy to use too many words. I started out thinking along the lines of "to verify as much as possible as often as possible", but that was a little too noisy. After several iterations I settled on the dense:

verify often

It is small enough to not be a formal definition. Formal definitions are often a bit far away from the real thing. This short form can also be remembered easily. So for this article, continuous integration is defined as "verify often".

Let's have a look at what we can verify. What first springs to mind is to check that the code base is fine. With a static language the first thing to do is to compile the code so that syntactic errors are found. After that, unit tests can be run to verify the semantics of the code. With a dynamic language the syntax is verified implicitly by the unit tests: they will simply not run if there are syntactic errors in the code. With this in place we move on to automatic installation of the system in an environment that resembles the target environment, and then perform integration and system tests (or whatever you call the different test levels in your organization). Almost all kinds of tests are possible to automate. The exception, of course, is the visual look and feel of a graphical interface.
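The levels described above can be sketched as an ordered list of stages where a failure stops everything that comes after it. This is only a minimal illustration: the stage names follow the text, but `run_pipeline` and the lambda stand-ins are hypothetical, and in a real setup each check would invoke a compiler, a test runner or an installation script.

```python
from typing import Callable, List, Optional, Tuple

# A stage is a (name, check) pair; the check returns True when it passes.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Optional[str]:
    """Run the stages in order; return the first failing stage's name, or None."""
    for name, check in stages:
        if not check():
            # Stop early: later stages assume that earlier ones passed.
            return name
    return None


# Hypothetical stand-ins for the real verification steps.
stages: List[Stage] = [
    ("compile", lambda: True),
    ("unit tests", lambda: True),
    ("install in test environment", lambda: False),
    ("integration and system tests", lambda: True),
]

first_failure = run_pipeline(stages)
print(first_failure or "all stages passed")
```

Ordering the cheap checks first means the developer hears about a broken build before any time is spent on installation or system tests.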

With testing in place and a sense of security that most bugs would be detected in any of the different test suites we can move on to automated deployment. For several kinds of systems it is possible to continuously deploy into production. For other kinds it is not possible at all.

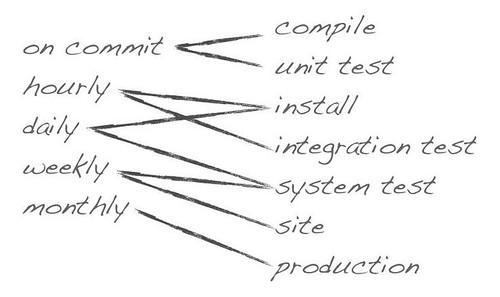

So how often is it possible to verify at all these levels? It would be great to do it all on commit, and that is indeed possible for smaller systems. The sooner word gets back to the developer that something is wrong, the better. The change will still be fresh in their mind and the problem is often easily fixed. Bugs that are detected after several weeks or even months are much harder to track down and correct. Examples of how different verifiables have different rhythms are depicted in the following diagram.

Keep in mind that this is just an example. As said earlier, it is good to push as much verification as possible as close to the code creation as possible. Letting this be a design force is actually good for a system's architecture in the long run: you will end up with loosely coupled system parts.
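The idea of different rhythms can also be written down as a small table that maps a trigger to the checks it runs. The particular assignment below is an assumption made for illustration, not a transcription of the diagram, and `checks_for` and `promote` are hypothetical helper names.

```python
from typing import Dict, List

# Assumed example rhythm: which checks run on which trigger.
RHYTHM: Dict[str, List[str]] = {
    "commit": ["compile", "unit tests"],
    "hourly": ["integration tests"],
    "nightly": ["system tests"],
}


def checks_for(rhythm: Dict[str, List[str]], trigger: str) -> List[str]:
    """Return the checks that run on the given trigger."""
    return list(rhythm.get(trigger, []))


def promote(rhythm: Dict[str, List[str]], check: str,
            to_trigger: str) -> Dict[str, List[str]]:
    """Return a new rhythm with `check` moved to `to_trigger`."""
    updated = {t: [c for c in checks if c != check]
               for t, checks in rhythm.items()}
    updated.setdefault(to_trigger, []).append(check)
    return updated


# Pushing verification closer to the code creation:
faster = promote(RHYTHM, "integration tests", "commit")
print(checks_for(faster, "commit"))
```

Each call to `promote` is a small step towards verifying everything on commit; how far it can be taken depends on how long the checks take to run.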

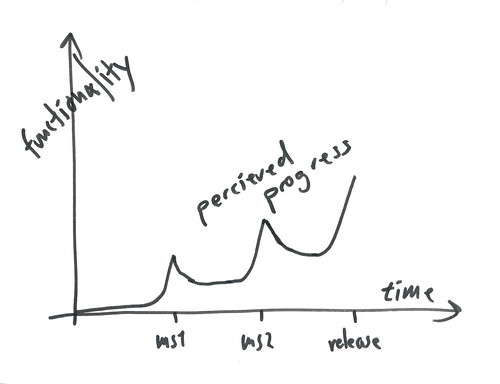

There are several common objections to continuous integration. They are usually about either expense or hard-to-test systems. These are definitely valid objections to make, but I am sure that you have heard them before, so let me give the discussion part another twist instead. I have played around with a couple of graphs to illustrate the benefits of continuous integration. The first one shows how a developer typically views his or her work:

Work starts with the code, functionality increases over time and finally a release is made. This is - of course - almost never true, but many a developer probably has this view nevertheless and finds ways to blame the surroundings for any shortcomings. Maybe the other developers were not that good. Or the specs were incorrect. It is always easy to project the blame somewhere else when working in a complex software project.

Consider the following graph instead. It is the view product owners and managers get for a typical software project.

As you can see, the software is only transparent at iteration ends. This is when something is released for testing. The armada of test personnel - who typically spend most of their days planning (tremendous waste of resources, that) - now gets the chance to test and - if lucky - the project is on track and the next iteration commences. In worse cases the software coming out of the first iteration is completely useless and the project is late from the beginning.

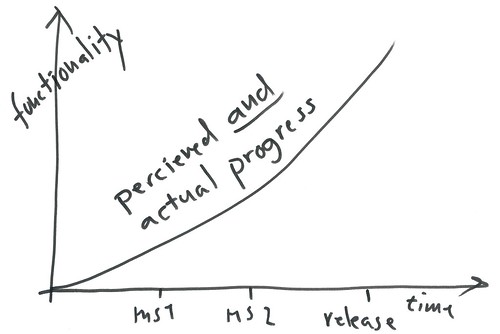

Apparently there is a big gap between the developers' view of the world and the corresponding view of manager-type people. The good news is that the power is with the developers. If they want to, they can make sure that continuous integration happens and that managers get good insight into the actual progress. (Managers typically don't understand that continuous integration actually is a very good thing for them.) And make the world look a bit more like this.

Nirvana - with continuous integration the actual and the perceived progress is the same. It is a good situation for everyone involved. And the good news again is that developers are needed to make it happen. Automation is basically what developers do all the time and continuous integration is just another kind of automation.

Finally a moment of encouragement with nice pics and all. In many settings, setting up a nice continuous integration environment may seem like an impossible task. It may actually be the case that a centralized place for automation is out of reach in some organizations; I sure have worked at such places myself. This is not, however, an excuse to do nothing at all. There is always something that can be done. With a bit of cron and a bit of shell script you can have your own handmade continuous integration server. Set up a bit of compiling or a bit of automated installation of a system environment. Let it sink in and gain acceptance, then move on and automate something else. Set up a Hudson instance on your own machine and show it to your peers. They might shrug their shoulders but at least you tried.
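As a rough idea of how small such a handmade server can be, here is a sketch of a polling script meant to be started by cron. It is written in Python rather than shell, and everything specific in it is an assumption: the function names are hypothetical, and how you obtain the current revision and which build command you run depend entirely on your own version control system and project.

```python
import subprocess
from pathlib import Path
from typing import Callable, List


def poll_once(current_revision: str, state_file: Path,
              build: Callable[[], bool]) -> str:
    """Build only if the revision changed since the previous run.

    Returns "unchanged", "passed" or "failed".
    """
    last = state_file.read_text().strip() if state_file.exists() else ""
    if current_revision == last:
        return "unchanged"
    ok = build()
    # Remember what was verified so the next cron run can skip it.
    state_file.write_text(current_revision)
    return "passed" if ok else "failed"


def run_command(command: List[str]) -> bool:
    """Run a build or test command; True when it exits with status 0."""
    return subprocess.run(command).returncode == 0
```

A script would call `poll_once` with the revision reported by your version control tool and a `build` callable such as `lambda: run_command([...])` wrapping your own build command, and then mail or log the result. A crontab entry that runs the script every few minutes completes the setup.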

Sometimes it feels like we work in organizations from the 19th century. The thinking and the doing have lingered on from a distant time. Things are done, or not done, because of tradition rather than for good reasons. This is not an excuse to not be awesome. Your own work can always be of the highest quality regardless of what low and strange expectations there are in the organization. Unit testing can be part of your way of developing even if there is "no way unit tests get into the version control system". Just do it anyway. Eventually someone will notice that your code comes with higher quality than that of others and that it doesn't slow you down. And sooner or later you will leave the place anyway, so better leave it with some great practices in place.

Finally some words about our managers. Keep in mind who is paying and who is doing the fun work. Let them get a great view on the progress in exchange for paying for your work. Shouldn't be that hard should it?

The talk that accompanies this post was first held at Javaforum Stockholm on the 10th of November and will be held at Devoxx on the 18th. Future engagements with this talk will be posted in this space (as well as on Twitter).

Old comments

2010-11-15 Hardy Ferentschik

You could argue that this should be the job of QA, but what if the QA team sucks? Why not be proactive and become the authoritative person in your company when it comes to continuous integration?